How CISOs Can Lead the Responsible AI Charge

CISOs understand the risk scenarios that can help create safeguards so everyone can use AI safely and focus on the technology's promises and opportunities.

COMMENTARY

No one wants to miss the artificial intelligence (AI) wave, but the "fear of missing out" has leaders poised to step onto an already fast-moving train where the risks can outweigh the rewards. A PwC survey highlighted a stark reality: 40% of global leaders don't understand the cyber-risks of generative AI (GenAI), despite their enthusiasm for the emerging technology. That gap is a red flag: negligent AI adoption could expose companies to security risks. It is precisely why a chief information security officer (CISO) should lead AI technology evaluation, implementation, and governance. CISOs understand the risk scenarios that can help create safeguards so everyone can use the technology safely and focus more on AI's promises and opportunities.

The AI Journey Starts With a CISO

Embarking on the AI journey can be daunting without clear guidelines, and many organizations are uncertain about which C-suite executive should lead the AI strategy. Although having a dedicated chief AI officer (CAIO) is one approach, the fundamental issue remains that integrating any new technology inherently involves security considerations.

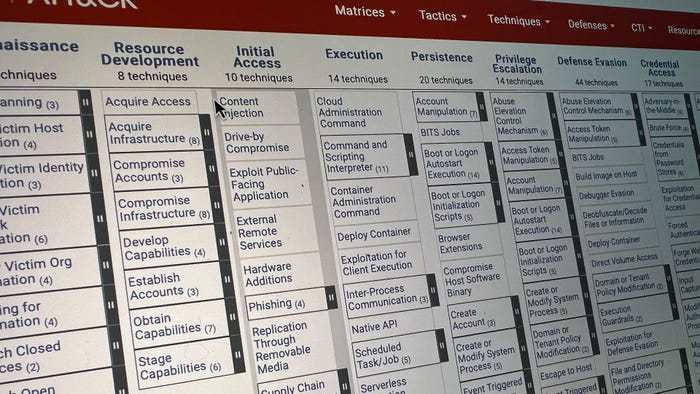

The rise of AI is bringing security expertise to the forefront of organization-wide security and compliance. CISOs are critical to navigating the complex AI landscape amid emerging regulations and executive orders to ensure privacy, security, and risk management. As a first step in an organization's AI journey, CISOs are responsible for implementing a security-first approach to AI and establishing a proper risk management strategy via policy and tools. This strategy should include:

Aligning AI goals: Establish an AI consortium to align stakeholders and the adoption goals with your organization's risk tolerance and strategic objectives to avoid rogue adoption.

Collaborating with cybersecurity teams: Partner with cybersecurity experts to build a robust risk evaluation framework.

Creating security-forward guardrails: Implement safeguards to protect intellectual property, customer and internal data, and other critical assets against cyber threats.

Determining Acceptable Risk

Although AI has plenty of promise for organizations, rapid and unrestrained GenAI deployment can lead to issues like product sprawl and data mismanagement. Preventing the risk associated with these problems requires aligning the organization's AI adoption efforts.

CISOs ultimately set the security agenda with other leaders, like chief technology officers, to address knowledge gaps and ensure the entire business is aligned on the strategy to manage governance, risk, and compliance. CISOs are responsible for the entire spectrum of AI adoption, from securing AI consumption (e.g., employees using ChatGPT) to building AI solutions. To help determine acceptable risk for their organization, CISOs can establish an AI consortium of key stakeholders who work cross-functionally to surface risks associated with the development or consumption of GenAI capabilities, establish acceptable risk tolerances, and act as a shared enforcement arm to maintain appropriate controls on the proliferation of AI use.

If the organization is focused on securing AI consumption, the CISO must determine how employees can and cannot use the technology. GenAI tools can be whitelisted or blacklisted, or managed more granularly with products like Harmonic Security that enable risk-managed adoption of SaaS-delivered GenAI tech. On the other hand, if the organization is building AI solutions, CISOs must develop a framework for how the technology will work. In either case, CISOs must keep a pulse on AI developments to recognize the potential risks and staff projects with the right resources and experts for responsible adoption.
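To make the whitelisting and blacklisting option concrete, here is a minimal sketch of how such a policy check might look. It is an illustration under stated assumptions, not a description of any vendor's product: the domain names, the `evaluate_genai_request` function, and the "review" fallback are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical policy lists; the domains are placeholders, not recommendations.
ALLOWED_DOMAINS = {"approved-genai.example.com"}
BLOCKED_DOMAINS = {"unvetted-genai.example.net"}


def evaluate_genai_request(url: str) -> str:
    """Return 'allow', 'block', or 'review' for a requested GenAI tool URL."""
    # urlparse yields no hostname for malformed input, so fall back to "".
    host = (urlparse(url).hostname or "").lower()
    if host in BLOCKED_DOMAINS:
        return "block"
    if host in ALLOWED_DOMAINS:
        return "allow"
    # Assumption: unknown tools are escalated rather than silently permitted.
    return "review"
```

Routing unknown tools to "review" instead of blocking them outright is one possible design choice: it gives the AI consortium a queue of new tools to assess against the organization's risk tolerance.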

Locking in Your Security Foundation

Because CISOs have a security background, they can implement a robust security foundation for AI adoption that proactively manages risk and establishes the correct barriers to prevent breakdowns from cyber threats. CISOs connect cybersecurity and information teams with business units so that everyone stays informed about threats, industry standards, and regulations like the EU AI Act.

In other words, CISOs and their security teams establish comprehensive guardrails, from asset management to robust encryption techniques, that form the backbone of secure AI integration. These guardrails protect intellectual property, customer and internal data, and other vital assets. They also ensure a broad spectrum of security monitoring, backed by rigorous personnel security checks and ongoing training, so teams can respond promptly and effectively to potential security incidents.

Remaining vigilant about the evolving security landscape is essential as AI becomes mainstream. By seamlessly integrating security into every step of the AI life cycle, organizations can be proactive against the rising use of GenAI for social engineering attacks, which makes it harder to distinguish between genuine and malicious content. Additionally, bad actors are leveraging GenAI to create vulnerabilities and accelerate the discovery of weaknesses in defenses. To address these challenges, CISOs must remain diligent, continuing to invest in preventative and detective controls and considering new ways to spread awareness across the workforce.

Final Thoughts

AI will touch every business function, even in ways that have yet to be predicted. As the bridge between security efforts and business goals, CISOs serve as gatekeepers for quality control and responsible AI use across the business. They can articulate the groundwork needed for security integrations that avoid missteps in AI adoption and enable businesses to unlock AI's full potential to drive better, more informed business outcomes.
